AI services are more accessible to developers than ever before. Salesforce acquired MetaMind last year and made some big announcements at Dreamforce 2016. Like many developers, I was keen to find out about its API. The answer at the time was “check back with us next year!”.

With Spring ’17 that question has been answered, at least as far as image recognition is concerned, with the availability of the Salesforce Einstein Predictive Vision Service (Pilot). The pilot is open to the public and is free to sign up for.

True AI consists of recognition, be that visual or spoken, performing actions, and the final, most critical piece: learning. This blog explores the spoken and visual recognition piece further, with the added help of Flow for performing practically any action you can envision!

You may recall a blog from last year about integrating Salesforce with Amazon Echo. To explore the new Einstein API, I decided to build on that work so that I could trigger recognition of my pictures from Alexa. The use of Salesforce Flow also made it easy to extend via custom Apex Actions. Thus the Einstein Apex Action was born! After a small bit of code and some configuration I had a working voice-activated image recognition demo up and running.

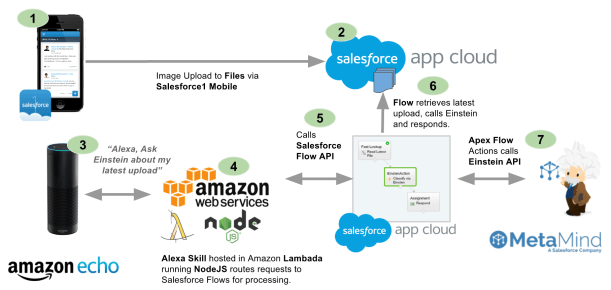

The following diagram breaks down what just happened in the video above, followed by a deeper walk-through of the Predictive Vision Service and how to call it.

1. Using the Salesforce1 Mobile app, I uploaded an image using the Files feature.

2. Salesforce stores this in the ContentVersion object for later querying (step 6).

3. Using the Alexa skill, called Einstein, I was able to “Ask Einstein about my photo”.

4. This NodeJS skill runs on Amazon and simply routes requests to Salesforce Flow.

5. Spoken terms are passed through to a named Flow via the Flow API.

6. The Flow is simple in this case: it queries ContentVersion for the latest upload.

7. The Flow then calls the Einstein Apex Action, which in turn calls the Einstein REST API via Apex (more on this later). Finally, a Flow assignment takes the resulting prediction of what the image is actually of and uses it to build a spoken response.
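The routing step, where the skill hands the spoken terms to a named Flow, can be pictured with a short Node.js sketch. It only builds the HTTP request and does not send it. It assumes the Flow is run through the Salesforce REST API custom invocable action resource for flows; the skill in the demo may well do this differently. The instance URL, Flow name, input variable name and API version are hypothetical values chosen for illustration.

```javascript
// Sketch only: builds (but does not send) a REST request that runs a named Flow.
// The Flow name, input variable name and API version used below are assumptions.
function buildFlowRequest(instanceUrl, flowApiName, spokenTerms, apiVersion = 'v39.0') {
  return {
    method: 'POST',
    url: `${instanceUrl}/services/data/${apiVersion}/actions/custom/flow/${encodeURIComponent(flowApiName)}`,
    // A real call would also need an "Authorization: Bearer <token>" header.
    headers: { 'Content-Type': 'application/json' },
    // Each entry in "inputs" starts one Flow interview; keys map to Flow input variables.
    body: JSON.stringify({ inputs: [spokenTerms] })
  };
}

const flowRequest = buildFlowRequest(
  'https://example.my.salesforce.com', // hypothetical instance URL
  'Einstein',                          // hypothetical Flow API name
  { Alexa_Slot_Subject: 'photo' }      // hypothetical Flow input variable
);
console.log(flowRequest.url);
```

Keeping the request builder separate from the code that sends it makes it easy to point the same skill at a different Flow without touching the rest of the code.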

Standard Example: The demo above exposes the Einstein API as an Apex Action purely to integrate with the Amazon Echo use case. The pilot documentation walks you through a standalone Apex and Visualforce example to get you started.

How does the Einstein Predictive Vision Service API work?

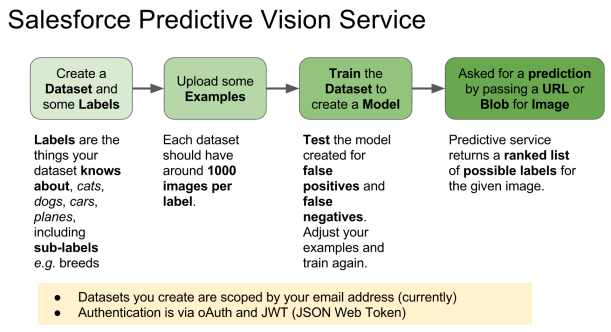

The service introduces a few new terms to get your head round. Firstly, a dataset is a named container for the types of images (labels) you want to recognise. The demo above uses a predefined dataset and model. A model is the output from the process of taking examples of each of your dataset’s labels and processing them (training). Initiating this process is pretty easy: you just make a REST API call with your dataset ID. All the recognition magic happens behind the scenes, and you just poll until it’s done. After that, all you have to do is test the model with other images. The service returns ranked predictions (using the dataset’s labels) on what it thinks your picture is of. When I ran the pictures above of my family dogs for the first time, I was pretty impressed that it detected the breeds.

While quite fiddly at times, it is also well worth walking through how to set up your own image datasets and training to get a hands-on example of the above.
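To make the idea of ranked predictions a little more concrete, here is a minimal Node.js sketch that orders a prediction response by probability and drops low-confidence labels. The response shape (a `probabilities` array of `label` and `probability` pairs) is an assumption made for illustration, and the sample values are made up; check the pilot documentation for the real payload.

```javascript
// Sketch only: the response shape is an assumption, not taken from the pilot docs.
function rankPredictions(response, minProbability = 0) {
  return (response.probabilities || [])
    .filter(p => p.probability >= minProbability)      // drop low-confidence labels
    .sort((a, b) => b.probability - a.probability)     // most probable first
    .map(p => ({ label: p.label, probability: p.probability }));
}

// Made-up sample data for illustration only.
const sampleResponse = {
  probabilities: [
    { label: 'beagle', probability: 0.12 },
    { label: 'pug', probability: 0.85 },
    { label: 'tabby cat', probability: 0.03 }
  ]
};

const ranked = rankPredictions(sampleResponse, 0.1);
console.log(ranked[0].label);
```

The demo only ever speaks the first entry, but keeping the whole ranked list around makes it easy to offer a second guess when the top probability is low.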

How do I call the Einstein API from Apex?

Salesforce saved me the trouble of wrapping the REST API in Apex and has started an Apex wrapper in this GitHub repo. When you sign up you get a private key file that you have to upload into Salesforce to authenticate the calls. Currently the private key file the pilot gives you seems to be scoped to your org user’s associated email address.
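For readers wondering how a private key file turns into an authenticated call, the sketch below shows one common pattern: signing a short-lived JWT assertion with the key, which would then be exchanged for an access token. That the pilot works this way is an assumption on my part, as are the claim names and values; the Apex wrapper handles the real details. The sketch generates a throwaway key pair so it runs on its own, and it does not call any endpoint.

```javascript
const crypto = require('crypto');

// base64url encoding without padding, as used by JWTs.
function base64url(input) {
  return Buffer.from(input).toString('base64')
    .replace(/=/g, '').replace(/\+/g, '-').replace(/\//g, '_');
}

// Builds an RS256-signed JWT from caller-supplied claims.
function signAssertion(privateKeyPem, claims) {
  const header = base64url(JSON.stringify({ alg: 'RS256', typ: 'JWT' }));
  const payload = base64url(JSON.stringify(claims));
  const signingInput = `${header}.${payload}`;
  const signature = crypto.createSign('RSA-SHA256').update(signingInput).sign(privateKeyPem);
  return `${signingInput}.${base64url(signature)}`;
}

// Throwaway key pair for the sketch; the real key is the file you get at signup.
const { privateKey, publicKey } = crypto.generateKeyPairSync('rsa', {
  modulusLength: 2048,
  privateKeyEncoding: { type: 'pkcs8', format: 'pem' },
  publicKeyEncoding: { type: 'spki', format: 'pem' }
});

const assertion = signAssertion(privateKey, {
  sub: 'user@example.com',                      // hypothetical: email the key is scoped to
  aud: 'https://token-endpoint.example/oauth2', // hypothetical token endpoint
  exp: Math.floor(Date.now() / 1000) + 300      // expires in five minutes
});
console.log(assertion.split('.').length);
```

If the key really is scoped to an email address, the `sub` claim is where that address would naturally go, which would explain why a key issued for one user does not work for another.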

```apex
public with sharing class EinsteinAction {

    // Result returned to the Flow for each prediction
    public class Prediction {
        @InvocableVariable
        public String label;
        @InvocableVariable
        public Double probability;
    }

    @InvocableMethod(label='Classify the given files' description='Calls the Einstein API to classify the given ContentVersion files.')
    public static List<EinsteinAction.Prediction> classifyFiles(List<ID> contentVersionIds) {
        // Obtain an access token using the helper class from the GitHub repo
        String access_token = new VisionController().getAccessToken();
        // Only the first file is processed (see the note below)
        ContentVersion content = [SELECT Title, VersionData FROM ContentVersion WHERE Id IN :contentVersionIds LIMIT 1];
        List<EinsteinAction.Prediction> predictions = new List<EinsteinAction.Prediction>();
        for (Vision.Prediction vp : Vision.predictBlob(content.VersionData, access_token, 'GeneralImageClassifier')) {
            EinsteinAction.Prediction p = new EinsteinAction.Prediction();
            p.label = vp.label;
            p.probability = vp.probability;
            predictions.add(p);
            break; // Just take the most probable
        }
        return predictions;
    }
}
```

NOTE: The above method only handles the first file passed in the parameter list, which is the minimum needed for this demo. To bulkify it you can remove the LIMIT in the SOQL and ideally put the file ID back in the response. It might also be useful to expose the other predictions and not just the first one.

The VisionController and Vision Apex classes from the GitHub repo are used in the above code. It looks like the repo is still very much WIP, so I would expect the API to change a bit. They also assume that you have followed the standalone example tutorial here.

Summary

This initial API has made it pretty easy to access a key part of AI with what is essentially only a handful of simple REST API calls. I’m looking forward to seeing where this goes and where Salesforce goes next with future AI services.

One thing that simplifies the process is hosting your skill code through the

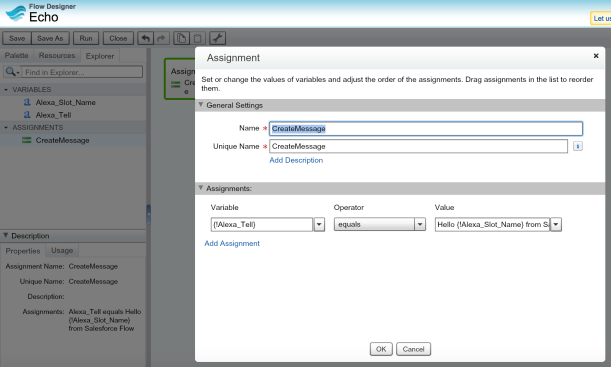

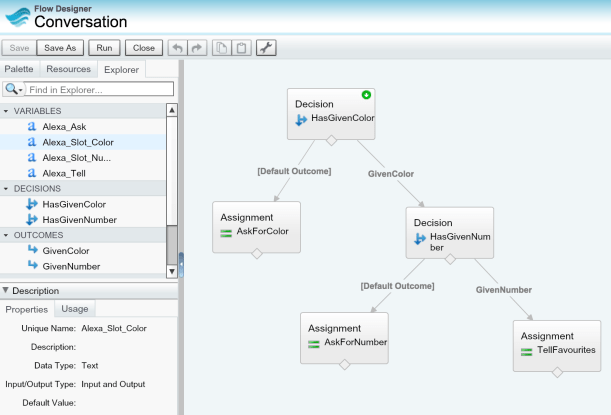

To code my Skill I went with NodeJS, as I had not done a lot of coding in it and wanted to challenge myself. The other challenge I set myself was to integrate in a generic and extensible way with Salesforce. Thus I wanted to incorporate my old friend Flow!
