I’m proud to announce that the third edition of my book has now been released. Back in March this year I took the plunge to start updating many key areas and to add two brand new chapters. In the 2 years and 8 months since the last edition there have been several platform releases and an increasing number of new features and innovations, which made this the biggest update ever! This edition also embraces the platform’s rebranding to Lightning, hence the book is now entitled Salesforce Lightning Platform Enterprise Architecture.

You can purchase this book direct from Packt or, of course, from Amazon, among other sellers. As is the case every year, this book and many other awesome publications will be on sale at Salesforce events such as Dreamforce and TrailheaDX. Here are some of the key update highlights:

- Automation and Tooling Updates

Throughout the book, SFDX CLI, Visual Studio Code and 2nd Generation Packaging are leveraged. While the book as a whole is certainly larger, certain chapters actually reduced in size, as steps that previously described clicks were replaced with CLI commands! At one point in time I was quite a master of Ant scripts and macros; they have also given way to built-in SFDX commands.

- User Interface Updates

Lightning Web Components is a relatively new kid on the block, but benefits greatly from its standards compliance, meaning there is plenty of fun to be had exploring industry tools like Jest in the Unit Testing chapter. All of the book’s components have been rewritten to the Web Component standard.

- Big Data and Async Programming

Big data was once a future concern for new products; these days it is very much a concern from the very start. The book covers Big Objects and Platform Events more extensively with worked examples, including ingest and calculations driven by Platform Events and Async Apex Triggers. Event Driven Architecture is something every Lightning developer should be embracing as the platform continues to evolve around more and more standard platforms and features that leverage them.

- Integration and Extensibility

I particularly enjoyed exploring the use of Platform Events as another means by which you can expose APIs from your packages to support more scalable invocation of your logic and asynchronous plugins.

- External Integrations and AI

External integrations with other cloud services are a key part of application development and also of the implementation of your solution. Thus, one of the two brand new chapters focuses on Connected Apps, Named Credentials, External Services and External Objects, with worked examples using existing services or sample Heroku-based services. Einstein has an ever-growing surface area across Salesforce products and the platform. While this topic alone is worth an entire book, I took the time in the second new chapter to enumerate Einstein from the perspective of the developer and customer configurations. The Formula1 motor racing theme continues with the ingest of historic race data that you can run AI over.

- Other Updates

Among the other updates is a fairly extensive update to the CI/CD chapter, which still covers Jenkins but leverages the new Jenkins Pipeline feature to integrate SFDX CLI. The Unit Testing chapter has also been extended with further thoughts on unit vs integration testing and a focus on Lightning Web Component testing.

The above are just the highlights of this third edition; you can see a full table of contents here. A massive thanks to everyone involved for providing the inspiration and support that made this third edition happen! Enjoy!

This was my first TrailheaDX and what an event it was! With my Field Guide in hand I set out into the wilderness! In this blog I’ll share some of my highlights, thoughts and links to the latest resources. Many of the newly announced things you can actually get your hands on

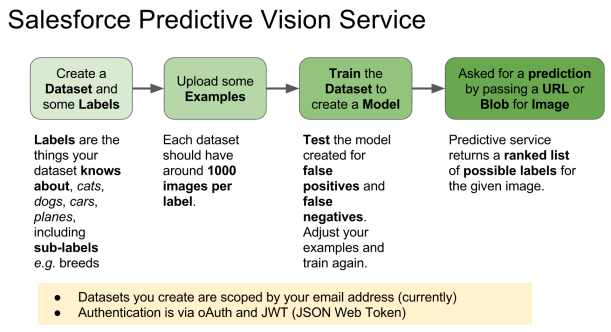

The service introduces a few new terms to get your head round. Firstly, a dataset is a named container for the types of images (labels) you want to recognise.
