In my last blog post I focused on getting the Lego robot to understand a selection of commands, which I posted to its Chatter persona using Salesforce’s Chatter mobile app. In return, it gave basic confirmations (as post comments) that it had executed the commands.

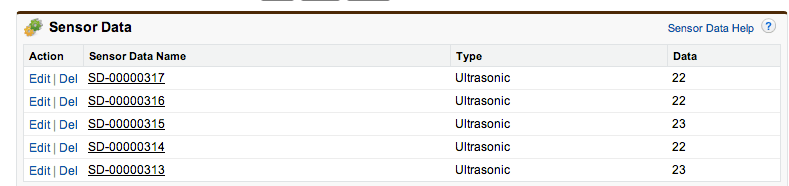

This time I wanted to explore the sensors that came with the kit, and the ways in which I can push the data from those sensors into Force.com for further processing and analytics. Eventually, this should enable dynamic adaptation of the robot through a combination of Apex code running in the cloud and NXC code running within the robot.

Read more and watch the video here…