Batch Apex has been around on the platform for a while now, but I think it’s fair to say there is still a lot of mystery around it, and with that a few baked-in assumptions. One such assumption I see being made is that it’s driven by the database, specifically that the records within the database determine the work to be done.

As such, if you have some work you need to get done that won’t fit in the standard governors and it’s not immediately database driven, Batch Apex may get overlooked in favour of @future, which on the surface feels like a better fit as its design is not database linked in any way. Your code is just an annotation away from getting the additional power it needs! So why bother with the complexities of Batch Apex?

Well, for starters, Batch Apex gives you an ID to trace the work being done, and thus the key to improving the user experience while the user waits. Secondly, if any of the parameters to your @future methods are lists or arrays, you’re already having to consider scalability again. Yes, you say, but it’s more fiddly than @future, isn’t it?

In this blog I’m going to explore a cool feature of Batch Apex that often gets overlooked: using it to implement a worker pattern, giving you the kind of usability @future offers with the additional scalability and traceability of Batch Apex, without all the work. If you’re not interested in the background, feel free to skip to the BatchWorker section below!

IMPORTANT NOTE: The alternative approach described here is not designed as a replacement for using Batch Apex against the database using QueryLocator. Using QueryLocator gives access to 50m records, whereas the Iterator usage gives only 50k. Thus the use cases for the Batch Worker are more aligned with smaller jobs, perhaps driven by end user selections or stitching together complex chunks of work.
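For contrast, the database-driven style this note refers to looks roughly like the sketch below. The class name AccountNameBatch and the choice of the standard Account object are my own illustrative assumptions and not part of the examples that follow.

```apex
// Hypothetical example of the database-driven style: the records returned
// by the query determine the work to be done.
public with sharing class AccountNameBatch implements Database.Batchable<SObject>
{
    public Database.QueryLocator start(Database.BatchableContext info)
    {
        // A QueryLocator lets the platform page through the result set for us
        return Database.getQueryLocator([SELECT Id, Name FROM Account]);
    }

    public void execute(Database.BatchableContext info, List<SObject> scope)
    {
        for(SObject record : scope)
        {
            Account acc = (Account) record;
            // Do something with each account
            // ...
        }
    }

    public void finish(Database.BatchableContext info) { }
}
```

Everything else in this post swaps the QueryLocator for an Iterable, so the work no longer has to come from a query.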

Well I didn’t know that! (#WIDKT)

First, let’s review something you may not have realised about implementing Batch Apex. The start method can return either a QueryLocator or something called an Iterable. You can implement your own iterators, but what is actually not that clear is that Apex collections/lists implement Iterable by default!

Iterable<String> i = new List<String> { 'A', 'B', 'C' };
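For completeness, here is roughly what implementing your own iterator involves. This is only a minimal sketch: RangeIterable and upperBound are made-up names for illustration, and the class is deliberately single-pass (it hands itself back as the iterator) to keep it short.

```apex
// Hypothetical example: yields the integers 1..upperBound without building a list first
public with sharing class RangeIterable implements Iterable<Integer>, Iterator<Integer>
{
    private Integer position = 0;
    private Integer upperBound;

    public RangeIterable(Integer upperBound)
    {
        this.upperBound = upperBound;
    }

    // Iterable: hand back an iterator (this same object, so it can only be walked once)
    public Iterator<Integer> iterator()
    {
        return this;
    }

    // Iterator: is there another item to return?
    public Boolean hasNext()
    {
        return position < upperBound;
    }

    // Iterator: advance and return the next item
    public Integer next()
    {
        position++;
        return position;
    }
}
```

An instance of this could be returned from a start method declared as returning Iterable<Integer>. Most of the time, though, a plain list is all you need.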

With this knowledge, implementing Batch Apex to iterate over a list is now as simple as this…

    public with sharing class SimpleBatchApex implements Database.Batchable<String>
    {
        public Iterable<String> start(Database.BatchableContext BC)
        {
            return new List<String> { 'Do something', 'Do something else', 'And something more' };
        }

        public void execute(Database.BatchableContext info, List<String> strings)
        {
            // Do something really expensive with the string!
            String myString = strings[0];
        }

        public void finish(Database.BatchableContext info) { }
    }

    // Process the strings one by one, each with its own governor context
    Id jobId = Database.executeBatch(new SimpleBatchApex(), 1);

The second parameter of the Database.executeBatch method determines how many items from the list are passed to each execute method invocation made by the platform. To get the maximum governors per item and match that of a single @future call, this is set to 1. We can also implement Batch Apex with a generic data type known as Object, which allows you to process different types or actions in one job; more about this later.

    public with sharing class GenericBatchApex implements Database.Batchable<Object>
    {
        public Iterable<Object> start(Database.BatchableContext BC)
        {
            // Return the work items here; a list of Object can hold any mix of types
            return new List<Object>();
        }

        public void execute(Database.BatchableContext info, List<Object> listOfAnything) { }

        public void finish(Database.BatchableContext info) { }
    }

A BatchWorker Base Class

The above simplifications are good, but I wanted to further model the type of flexibility @future gives without dealing with the Batch Apex mechanics each time. In designing the BatchWorker base class used in this blog, I wanted to make its use as easy as possible. I’m a big fan of the fluent API model, so if you look closely you’ll see elements of that here as well. You can view the full source code for the base class here; it’s quite a small class though, extending the concepts above to make a more generic Batch Apex implementation.
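To give a feel for how little is involved, a base class supporting the usage shown in the rest of this post could look something like the sketch below. Treat it as an approximation inferred from the examples here (the addWork, run, doWork and BatchJobId members they rely on), not a copy of the linked source, which may differ in its details.

```apex
// Sketch only: inferred from the usage in this post, not the linked source
public abstract with sharing class BatchWorker implements Database.Batchable<Object>
{
    // Id of the Batch Apex job, available once run() has been called
    public Id BatchJobId { get; private set; }

    // Work items collected via addWork(); these travel with the job instance
    private List<Object> workList = new List<Object>();

    // Queue an item of work; returns this to allow fluent chaining
    public virtual BatchWorker addWork(Object work)
    {
        workList.add(work);
        return this;
    }

    // Start the job; a scope size of 1 gives each item its own governor context
    public virtual BatchWorker run()
    {
        BatchJobId = Database.executeBatch(this, 1);
        return this;
    }

    // Subclasses implement the actual work for a single item
    public abstract void doWork(Object work);

    public Iterable<Object> start(Database.BatchableContext info)
    {
        return workList;
    }

    public void execute(Database.BatchableContext info, List<Object> scope)
    {
        for(Object work : scope)
            doWork(work);
    }

    public virtual void finish(Database.BatchableContext info) { }
}
```

Because the worker instance is handed to the platform along with its list, anything passed to addWork needs to be something the platform can carry along with the job, so simple data classes are the safest choice.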

First, let’s take another look at the string example above, but this time using the BatchWorker base class.

    public with sharing class MyStringWorker extends BatchWorker
    {
        public override void doWork(Object work)
        {
            // Do something really expensive with the string!
            String myString = (String) work;
        }
    }

    // Process the strings one by one, each with its own governor context
    Id jobId =
        new MyStringWorker()
            .addWork('Do something')
            .addWork('Do something else')
            .addWork('And something more')
            .run()
            .BatchJobId;

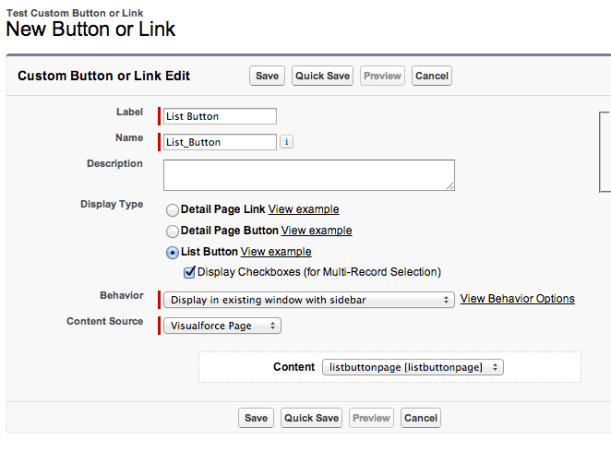

Clearly not everything is as simple as passing a few strings; after all, @future methods can take parameters of varying types. The following is a more complex example showing a ProjectWorker class. Imagine this is part of a Visualforce controller method where the user is presented with a selection of projects to process with a date range.

    // Create worker to process the project selection
    ProjectWorker projectWorker = new ProjectWorker();

    // Add the work to the project worker
    for(SelectedProject selectedProject : selectedProjects)
        projectWorker.addWork(startDate, endDate, selectedProject.projectId);

    // Start the worker and retain the job Id to provide feedback to the user
    Id jobId = projectWorker.run().BatchJobId;
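As for the feedback itself, one option is to query the standard AsyncApexJob object with the retained job Id. The helper below is a minimal sketch of that idea; JobProgress and summarise are hypothetical names of my own and not part of the BatchWorker code.

```apex
// Hypothetical helper for illustration; not part of the BatchWorker code
public with sharing class JobProgress
{
    // Returns a short progress message for the given Batch Apex job
    public static String summarise(Id jobId)
    {
        AsyncApexJob job = [
            SELECT Status, JobItemsProcessed, TotalJobItems, NumberOfErrors
            FROM AsyncApexJob
            WHERE Id = :jobId
        ];
        return job.Status + ': ' + job.JobItemsProcessed + ' of ' +
            job.TotalJobItems + ' batches processed, ' +
            job.NumberOfErrors + ' failed';
    }
}
```

With a scope size of 1, each batch corresponds to a single work item, so a controller could call this from a polling action to show the user how far through their selection the job has got.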

Here is how the ProjectWorker class has been implemented. Once again it extends the BatchWorker class, but this time it provides its own addWork method, which takes the parameters as you would normally describe them and internally wraps them up in a worker data class. The caller of the class, as you’ve seen above, is not aware of this.

    public with sharing class ProjectWorker extends BatchWorker
    {
        public ProjectWorker addWork(Date startDate, Date endDate, Id projectId)
        {
            // Construct a worker object to wrap the parameters
            return (ProjectWorker) super.addWork(new ProjectWork(startDate, endDate, projectId));
        }

        public override void doWork(Object work)
        {
            // Parameters
            ProjectWork projectWork = (ProjectWork) work;
            Date startDate = projectWork.startDate;
            Date endDate = projectWork.endDate;
            Id projectId = projectWork.projectId;

            // Do the work
            // ...
        }

        private class ProjectWork
        {
            public ProjectWork(Date startDate, Date endDate, Id projectId)
            {
                this.startDate = startDate;
                this.endDate = endDate;
                this.projectId = projectId;
            }

            public Date startDate;
            public Date endDate;
            public Id projectId;
        }
    }

As a final example, recall the fact that Batch Apex can process a list of generic data types. The BatchWorker base class uses this to permit the varied implementations above. It can also be used to create a worker class that can do more than one thing: the equivalent of implementing two @future methods, except that it’s managed as one job.

    public with sharing class ProjectMultiWorker extends BatchWorker
    {
        // ...

        public override void doWork(Object work)
        {
            if(work instanceof CalculateCostsWork)
            {
                CalculateCostsWork calculateCostsWork = (CalculateCostsWork) work;
                // Do work
                // ...
            }
            else if(work instanceof BillingGenerationWork)
            {
                BillingGenerationWork billingGenerationWork = (BillingGenerationWork) work;
                // Do work
                // ...
            }
        }
    }

    // Process the selected Project
    Id jobId =
        new ProjectMultiWorker()
            .addWorkCalculateCosts(System.today(), selectedProjectId)
            .addWorkBillingGeneration(System.today(), selectedProjectId, selectedAccountId)
            .run()
            .BatchJobId;

Summary

Hopefully I’ve provided some insight into new ways to access the power and scalability of Batch Apex for use cases which you may not have previously considered, or for which you perhaps used the less flexible @future annotation. Keep in mind that using Batch Apex with Iterators does reduce the number of items it can process to 50k, as opposed to the 50m when using a database query locator. At the end of the day, if you have more than 50k work items, you’re probably wanting to go down the database driven route anyway. I’ve shared all the code used in this article, and some I’ve not shown, in this Gist.

Post Credits

Finally, I’d like to give a nod to a past work associate of mine, Tony Scott, who has taken this type of approach down a similar path, but added process control semantics around it. Check out his blog here!